Week 5: Bag of Words Naive Bayes Exercise

Chris Tralie

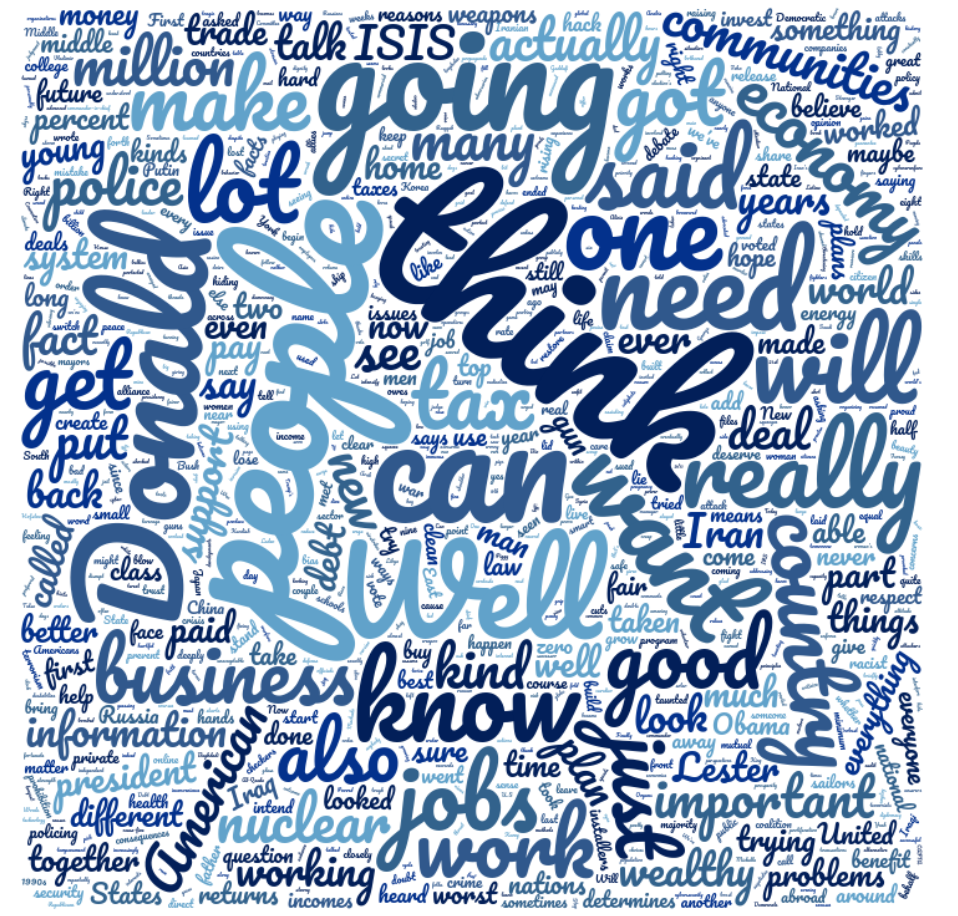

In homework 3, you've been modeling text as a sequence using Markov chains. We're going to do something seemingly much dumber and completely forget the sequence of words and focus just on how often words occur across entire documents. For example, from the first 2016 debate transcript on the homework, you get the following "bag of words" for Clinton (courtesy of wordclouds.com)

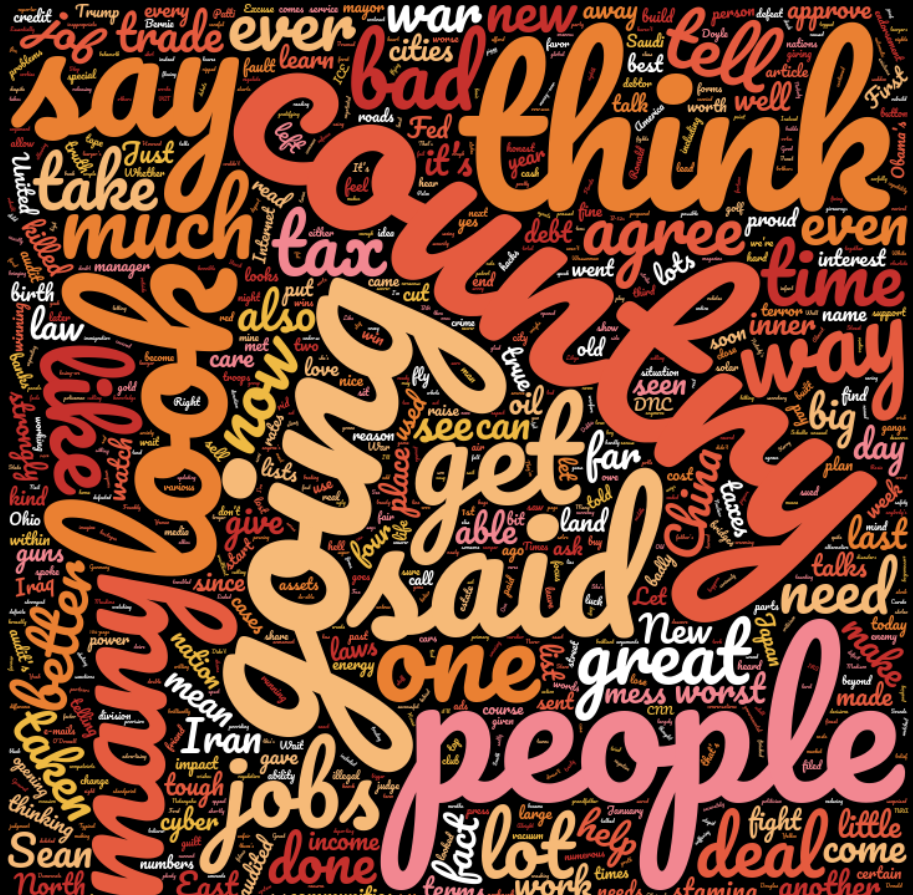

and the following for Trump

We can estimate the probability of a single word as simply the number of times that word was said divided by the total number of words, which will be proportional to the size of the words in the above word clouds. For example, Clinton said 6257 words total, and she said "Donald" 20 times, so her probabability of saying Donald would be estimated as

\[ 20/6257 \]

by contrast, Trump only says "Donald" once out of 8367 words, so his probability would be

\[ 1/8367 \]

Your task will be to estimate the probabilities for all words from data and assume complete independence, as per the naive bayes assumption, and multiply them together to get a total probability for both Trump and Clinton of each phrase, for each model. For example, if you got the text "Donald job job Donald Donald", and given that Trump said "job" 6/8367 words, we'd compute the likelihood of the Trump model as

\[ (1/8367) \times (6/8367) \times (6/8367) \times (1/8367) \times (1/8367) \]

You'd then also want to compute such a probability using the Clinton model, and choose the model with the highest probability as your estimate for who said what. You should try to classify all of the Trump and Clinton quotes from the third debate this way and record how many of your guesses are correct. As you go along, we will talk about some pitfalls, and after class, we will dig into some more theory about why this is actually correct and what the "Naive Bayes Model" is precisely

Tweaks To The Technique

Here are a few things to think about as you go:

- What's the problem with multiplying a lot of probabilities together? how would you fix this?

- What happens if we never saw a particular word in our "training data"? What should we do about that?

Programming tips

Here's some code to load the debate text for Clinton

Here's a slick way to loop through all of the test data (the third debate) for Clinton

To get a list of words in a string, use the split() method of the string class. For example,

would yield

Solution

Below is some code I wrote to implement this on the 2016 debate text. There were two problems that needed to be addressed which people pointed out in class:

- To prevent numerical underflow by multiplying tons of small numbers, I summed the logs of probabilities instead of multiplying them directly. The log is a monotonic function, so it preserves maxes, and this won't change which model wins.

- If we see a word when we're classifying a new document that wasn't in the training model, it will have a probability of 0 by default. But this will completely kill off the probability of the whole thing when multiplied through. So we need to do something more intelligent. We opt to do something called additive smoothing, in which each unique word has an extra count added to it. This means we also have to add to the denominator the unique number of words so that the probabilities still sum to 1. If we see a word that wasn't in the training data, we simply assign a 1/denominator to it

Below is the code. If you run it, you'll see that it's lightning fast! This is one of the advantages of Naive Bayes, even in situations where it doesn't work as well as other supervised learning techniques